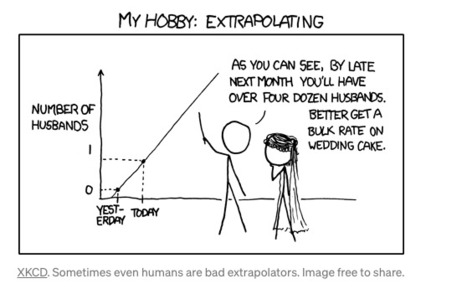

Recently presented this paper at the NeurIPS 2022 Workshop on Distribution Shifts. We demonstrate that implicit models are more robust on out-of-distribution data than classical deep learning architectures (MLPs, LSTMs, Transformers, and Google's Neural Arithmetic Logic Units). We speculate that implicit models, which are not restricted to a fixed number of layers, can adapt and grow to handle more complex data.
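
For readers new to the idea, here is a minimal sketch of what "unrestricted in layer number" can mean in practice. It is not the paper's code. It assumes one common formulation of an implicit layer, in which the hidden state is defined as the solution of x = tanh(Ax + Bu) and is found by fixed-point iteration. The function name `implicit_forward`, the weights `A` and `B`, and the tolerance are all hypothetical and chosen only for illustration.

```python
import math

def implicit_forward(A, B, u, tol=1e-8, max_iter=1000):
    """Solve x = tanh(A x + B u) by fixed-point iteration.

    Returns the equilibrium state x and the number of iterations used.
    The iteration count plays the role of an input-dependent "depth".
    """
    n = len(A)
    x = [0.0] * n
    for k in range(1, max_iter + 1):
        x_new = [
            math.tanh(
                sum(A[i][j] * x[j] for j in range(n))
                + sum(B[i][j] * u[j] for j in range(len(u)))
            )
            for i in range(n)
        ]
        # Stop once successive states agree to within the tolerance.
        if max(abs(a - b) for a, b in zip(x_new, x)) < tol:
            return x_new, k
        x = x_new
    raise RuntimeError("fixed-point iteration did not converge")

# Hypothetical weights. Each row of A has absolute sum below 1, so the
# update is a contraction in the infinity norm and the iteration converges.
A = [[0.5, -0.3], [0.2, 0.4]]
B = [[1.0], [-1.0]]

x_small, iters_small = implicit_forward(A, B, [0.1])
x_large, iters_large = implicit_forward(A, B, [5.0])
print("iterations:", iters_small, iters_large)
```

Unlike an MLP with a fixed stack of layers, the number of update steps here is set by the convergence test rather than by the architecture, which is one way to read the speculation above that such models can adapt to the data they see.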